I’m working on a case study with one of my customers that I think you’ll be interested in. I’m just beginning to put it together now, but I thought you’d appreciate a sneak preview. I’ll let you know when the final article is ready. (Update: the finished case study has now been published.)

Last fall this customer came to us with a sizeable integration and customization project. It came at a time when the financial and manufacturing world seemed to be falling down around us. I was, frankly, surprised that they wanted to spend that kind of money at the same time that banks and investment firms were collapsing, the stock market was imploding, and businesses were shedding employees like autumn leaves.

But we worked with him through our standard process of defining the project and formalizing a Statement of Work. We launched the project right around the new year. During that process, my customer agreed to meet with me in six months to do a post-mortem on the project. He said he’d be willing to open his books so we could evaluate – objectively – whether the project was paying for itself.

We finally got together last month – seven months after we finished our deployment. True to his word, he did open his books to me and demonstrated – with CFO-approved numbers – that he had paid for the initial investment in less than three months.

Many organizations look for a two-year payback. He had achieved his in an eighth of that time.

Now, seven months into the project, he had documented an ROI of 171%.
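
As a rough consistency check on these figures, here is a sketch under two assumptions that are not stated in the customer's books: ROI is taken as net benefit divided by the investment, and the benefit accrues evenly over the seven months. The symbols $I$ (investment) and $B$ (cumulative benefit) are introduced here only for illustration.

$$
\text{ROI} = \frac{B - I}{I} = 1.71 \;\Rightarrow\; B = 2.71\,I
$$

$$
\text{payback} \approx \frac{7\ \text{months}}{2.71} \approx 2.6\ \text{months}, \qquad \frac{3\ \text{months}}{24\ \text{months}} = \frac{1}{8}
$$

Under those assumptions, the 171% figure lines up with a payback of just under three months, which is one eighth of a two-year target.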

That got my attention.

We started by reviewing the work we had done with his team. This was a truly collaborative effort. His engineers had done an exceptionally fine job of building the foundation for the project, and then worked with my staff to implement the solution. Together they did a fantastic job of automating and integrating a variety of workflows and data systems. The result was a streamlined process for tracking repair and rework across multiple departments.

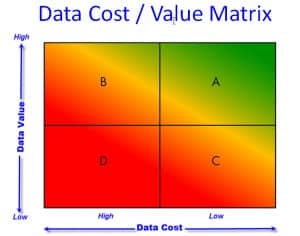

It was the classic tactic of “reduce the cost of data.” I knew that going into the debriefing meeting. And I expected that the ROI would be based on the efficiencies gained by eliminating islands of data, removing duplicate data entry, and integrating disparate data systems. I expected that he had paid for the project by eliminating staff through automation (I knew the company was going through a downsizing concurrent with our project). Clearly, we were helping this customer move laterally on the Data Cost / Value Matrix from expensive data to low-cost data.

As we dove into the data, I found a number of surprises.

First, he didn’t eliminate any jobs because of this project. As he reduced rework he reassigned the rework staff to more productive activities. They shifted from non-value-added status (overhead) to value-added production staff.

Second, reducing the cost of the data contributed only about 2% to the ROI. It was such a puny number. I had expected reducing the cost of the data would account for maybe 50% or 60% of the cost savings.

The lion’s share of the ROI came from improved throughput. Cheaper, more reliable, and more accessible data enabled his staff to drive defects out of the process. Reducing defects increased first-pass yield. This resulted in lower WIP (work in process), faster product delivery cycle times, and improved order-to-cash cycle times.

How are you looking at ROI? Do you ever understate (as I was tempted to do) the benefit you get from the value of the data? Leave a comment, tweet me, schedule a conversation, or call 800-958-2709.
